Understanding K-Nearest Neighbors

Supervised Machine Learning: K-Nearest Neighbors (KNN)

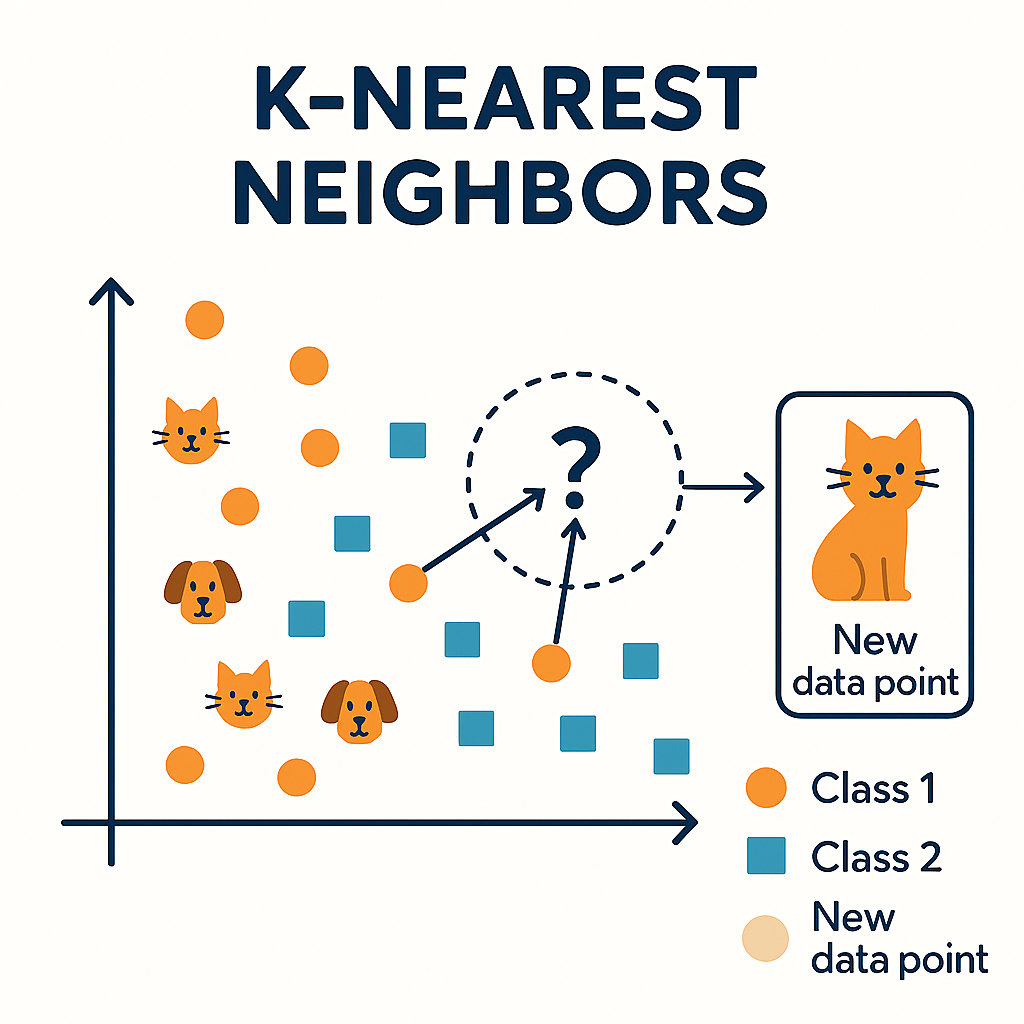

K-Nearest Neighbors (KNN) is a simple yet powerful supervised machine learning algorithm used for both classification and regression tasks. Instead of learning an explicit model, KNN makes predictions by comparing a new data point to the k most similar examples in the training set, typically using a distance metric such as Euclidean distance. The majority class (for classification) or the average value (for regression) among these neighbors determines the prediction.

Because KNN is a lazy learner, all computation happens at prediction time, which makes it easy to implement but potentially expensive for large datasets. It is highly intuitive, works well with well-separated classes, and can capture complex decision boundaries when combined with appropriate feature scaling and a carefully chosen value of k. However, KNN can be sensitive to noisy data, irrelevant features, and the curse of dimensionality, so preprocessing and feature selection are often crucial for good performance.

Key aspects of KNN include:

- Choice of k: Small k can lead to overfitting and noisy decisions, while very large k may oversmooth boundaries and underfit. Common practice is to tune k using cross-validation.

- Distance metric: Euclidean distance is standard for continuous features, but other metrics (Manhattan, Minkowski, cosine) may work better depending on the data.

- Feature scaling: Normalization or standardization is essential so that features with larger numeric ranges do not dominate the distance calculation.

- Weighted neighbors: Giving closer neighbors higher weights can improve robustness and accuracy.

- Applications: Pattern recognition, recommendation systems, anomaly detection, and any setting where similarity between examples is meaningful.

Despite its simplicity, KNN remains a strong baseline and a valuable tool for understanding distance-based learning methods.
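To make the distance metrics mentioned above a little more concrete, here is a minimal sketch in plain Python. The function names (`minkowski`, `euclidean`, `manhattan`, `cosine_distance`) are illustrative choices for this example, not taken from any particular library.

```python
import math

def minkowski(a, b, p):
    """Minkowski distance of order p (p=1 is Manhattan, p=2 is Euclidean)."""
    return sum(abs(x - y) ** p for x, y in zip(a, b)) ** (1 / p)

def euclidean(a, b):
    return minkowski(a, b, 2)

def manhattan(a, b):
    return minkowski(a, b, 1)

def cosine_distance(a, b):
    """1 - cosine similarity: compares direction and ignores vector length.

    Assumes neither vector is all zeros.
    """
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1 - dot / (norm_a * norm_b)

print(euclidean((0, 0), (3, 4)))        # 5.0 (straight-line distance)
print(manhattan((0, 0), (3, 4)))        # 7.0 (sum of per-feature differences)
print(cosine_distance((1, 0), (0, 1)))  # 1.0 (perpendicular directions)
```

Which metric suits a dataset is an empirical question, so it is usually worth trying more than one.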

Supervised Machine Learning: K-Nearest Neighbours (KNN) — Layman’s Explanation

Imagine you walk into a new neighbourhood and want to decide whether a house is expensive or cheap.

You don't know the price of this new house, but you look around at the houses near it and ask:

- What are their prices?
- What types of houses are they?

Then you simply copy the majority opinion of the nearby houses.

That is KNN — Look at your nearest neighbours and follow what most of them say.

KNN predicts something for a new item by checking the "K" closest items in its training data and choosing the most common outcome.

No heavy maths. No separate training step. Just comparing with past examples.

🧩 Real-Life Analogy

🧒 Kid choosing friends to play with…

A kid meets a new child in the park and wonders:

"Is this kid friendly?"

So the kid looks at the closest few kids around the new child:

- If most are friendly → assume the new kid is friendly.
- If most are rude → assume the new kid is rude.

That's KNN 😄

🪢 Why is it Called "Supervised"?

Because:

- We first "teach" it with labelled examples (e.g., these houses are "expensive", these are "cheap").
- Then it uses those labelled examples to predict new ones.

🔍 How KNN Works (Step-by-Step)

- Store all the data (e.g., past houses with their prices).
- For a new house:
  - Measure the distance to every stored house (usually Euclidean distance).
  - Pick the K nearest houses (say K=3).
  - Look at their labels:
    - 2 expensive
    - 1 cheap
  - Majority wins → Predict expensive.
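The steps above can be sketched in a few lines of plain Python. This is only an illustration: the function name `knn_classify`, the two features (size and bedrooms), and the house data are all made up for this example.

```python
import math
from collections import Counter

def knn_classify(train, query, k=3):
    """train is a list of (features, label) pairs; query is a feature tuple."""
    # Measure the Euclidean distance from the query to every stored example
    # and sort the examples from nearest to farthest.
    by_distance = sorted(train, key=lambda item: math.dist(item[0], query))
    # Keep only the K nearest examples.
    nearest = by_distance[:k]
    # Majority vote over their labels.
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# "Store all the data": hypothetical houses as ((size in sq ft, bedrooms), label).
houses = [
    ((2400, 4), "expensive"),
    ((2200, 4), "expensive"),
    ((1900, 3), "cheap"),
    ((900, 2), "cheap"),
    ((800, 1), "cheap"),
]

# The 3 nearest houses are 2 expensive and 1 cheap, so the vote is "expensive".
print(knn_classify(houses, (2100, 3), k=3))  # expensive
```

Notice there is no training function at all: the stored list is the whole "model", and all the work happens when a prediction is requested.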

🎛️ What is "K" in KNN?

It's the number of neighbours you want to consult.

- K = 1 → Just copy the closest example
- K = 5 → Look at 5 closest examples and vote

Choosing K is like deciding:

"Should I take advice from 1 friend or 5 friends?"

👍 Advantages

- Very simple (no brain-breaking maths)
- Works well for small datasets
- No training time (lazy learner)
- Easy to understand and visualize

👎 Disadvantages

- Slow when data is large (needs to calculate distance for every item)
- Doesn't work well with too many features
- Bad if data is not scaled
- Sensitive to noisy or irrelevant points
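To see why unscaled data hurts, here is a small sketch using min-max scaling. The people, their incomes and ages, and the helper names (`nearest_index`, `make_min_max_scaler`) are hypothetical and chosen only to make the effect visible.

```python
import math

def nearest_index(rows, query):
    """Index of the row with the smallest Euclidean distance to query."""
    return min(range(len(rows)), key=lambda i: math.dist(rows[i], query))

def make_min_max_scaler(rows):
    """Build a function that maps each feature to [0, 1] using the ranges in rows.

    Assumes every feature takes at least two distinct values (no zero range).
    """
    lows = [min(col) for col in zip(*rows)]
    highs = [max(col) for col in zip(*rows)]

    def scale(row):
        return tuple((x - lo) / (hi - lo) for x, lo, hi in zip(row, lows, highs))

    return scale

# Hypothetical people described by (yearly income, age).
people = [(30000, 20), (52000, 26), (50500, 60), (90000, 70)]
query = (50000, 25)

raw_match = people[nearest_index(people, query)]

scale = make_min_max_scaler(people)
scaled_people = [scale(p) for p in people]
scaled_match = people[nearest_index(scaled_people, scale(query))]

print(raw_match)     # (50500, 60): the income gap of 500 wins, the 35-year age gap barely counts
print(scaled_match)  # (52000, 26): after scaling, the similar age matters too
```

Without scaling, income dominates simply because its numbers are bigger, not because it is more important.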

📌 Where is KNN Used?

- Recommend products ("People similar to you bought…")
- Classifying emails as spam/not spam
- Predicting diseases (based on similar patients)
- Image recognition
- Fraud detection

KNN Example 1

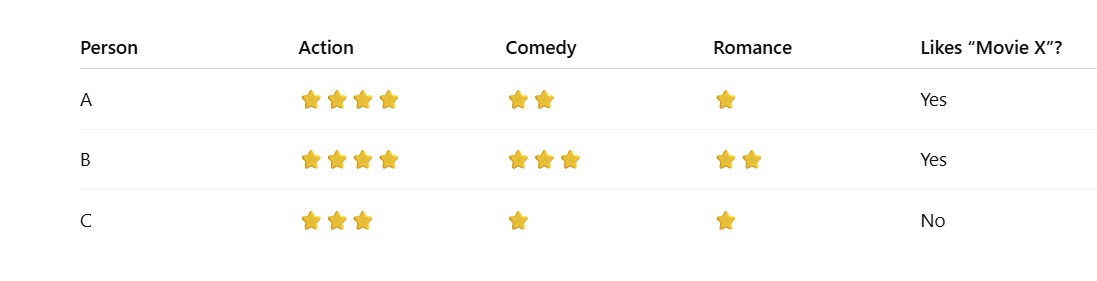

🎬 KNN Example (Choosing a Movie)

Imagine you want Netflix to recommend a movie to you.

You tell Netflix your favourite genres:

- Action: ⭐⭐⭐⭐
- Comedy: ⭐⭐⭐
- Romance: ⭐

Now Netflix wants to find a movie you will like, even though you haven't rated it yet.

So what does Netflix do?

🔍 Step 1: Look for "K nearest neighbours"

Netflix searches for people who have movie tastes similar to yours.

Example:

Netflix finds 3 people (K=3):

🧮 Step 2: Calculate distance

It checks whose preferences are closest to yours:

You → (4, 3, 1)

Neighbour A → (4, 2, 1)

Neighbour B → (4, 3, 2)

Neighbour C → (3, 1, 1)

A and B are very close to you; C is a bit farther away.
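The comparison above can be checked with a few lines of Python. The sketch assumes Euclidean distance over the (action, comedy, romance) star ratings given in the text; the variable names are illustrative.

```python
import math

you = (4, 3, 1)  # (action, comedy, romance) star ratings from the text
neighbours = {"A": (4, 2, 1), "B": (4, 3, 2), "C": (3, 1, 1)}

# Euclidean distance from your ratings to each neighbour's ratings.
distances = {name: math.dist(you, ratings) for name, ratings in neighbours.items()}

for name, d in sorted(distances.items(), key=lambda pair: pair[1]):
    print(f"{name}: {d:.2f}")
# A: 1.00
# B: 1.00
# C: 2.24
```

A and B each differ from you by one star in a single genre, while C differs in two genres, which is why C ends up farther away.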

🗳️ Step 3: Voting

Out of the 3 neighbours:

- 2 said "YES"
- 1 said "NO"

Majority wins → Predict: You'll like Movie X

So Netflix recommends Movie X to you.

KNN Example 2

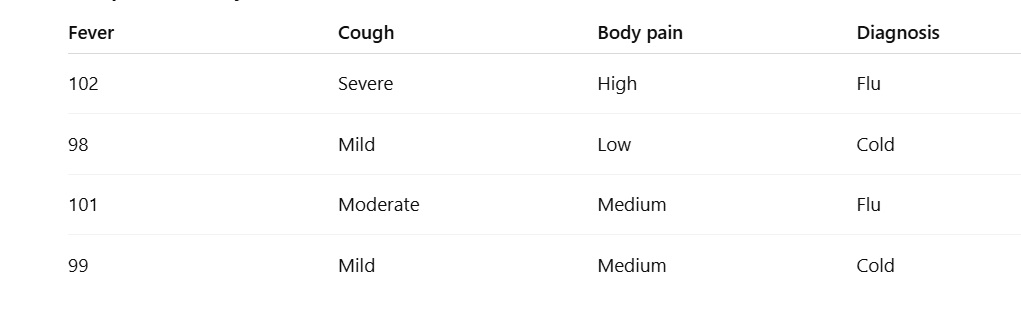

✅ Medical Example (Health Diagnosis)

A doctor wants to know whether a patient has flu or just a common cold.

Patient's symptoms:

- Fever: 101°F
- Cough: Moderate
- Body pain: Medium

Hospital already has stored data:

✔ Step 1: Calculate similarity

Check which past patients had similar fever/cough/body pain.

The 3 closest past patients (K=3) are:

- Flu
- Flu
- Cold

✔ Step 2: Vote

Flu = 2

Cold = 1

Prediction: The patient likely has Flu.

KNN works like a doctor comparing new patients to old patient records.

KNN Example 3

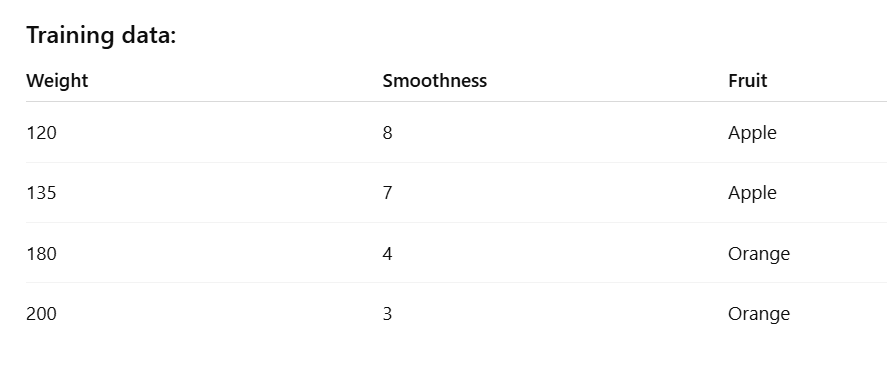

Numeric Dataset Example (Simple & Clear)

Say we want to predict whether a fruit is an Apple or Orange based on:

- Weight (grams)
- Smoothness (scale 1–10)

New fruit:

Weight = 150g, Smoothness = 6

✔ Step 1: Measure distance

The closest points to (150, 6) are:

- (135, 7) → Apple
- (120, 8) → Apple
- (180, 4) → Orange

(K = 3)

✔ Step 2: Vote

Apple = 2

Orange = 1

Prediction: Apple
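Here is the same fruit vote as a short Python sketch. It uses only the three neighbours listed above (the rest of the dataset is not shown in this example), and the variable names are illustrative.

```python
import math
from collections import Counter

new_fruit = (150, 6)  # (weight in grams, smoothness)
fruits = [
    ((135, 7), "Apple"),
    ((120, 8), "Apple"),
    ((180, 4), "Orange"),
]

# Sort by Euclidean distance to the new fruit and keep the K = 3 nearest.
nearest = sorted(fruits, key=lambda fruit: math.dist(fruit[0], new_fruit))[:3]

# Count the labels and pick the most common one.
votes = Counter(label for _, label in nearest)
prediction = votes.most_common(1)[0][0]

print(dict(votes))  # {'Apple': 2, 'Orange': 1}
print(prediction)   # Apple
```

Weight differences here are in tens of grams while smoothness differs by only a point or two, so weight drives almost all of the distance. In practice you would scale both features first.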

KNN Example 4

Regression Example (Predicting a NUMBER)

This time KNN predicts not a label, but a value.

🎯 Example: Predicting house price

We want to estimate the price of a new house (size = 1200 sq ft).

✔ Step 1: Find the 3 nearest

The closest sizes to 1200 are:

- 1100 → 55 lakh
- 1000 → 50 lakh
- 1300 → 70 lakh

✔ Step 2: Take average (because regression)

Average = (50 + 55 + 70) / 3 = 58.33 lakh

✔ Predicted Price: ~58.3 lakh
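The regression version can be sketched the same way. The function name `knn_regress` is an illustrative choice, and the two extra houses (600 and 2000 sq ft) are hypothetical, added only so there is something to leave out when picking the 3 nearest.

```python
def knn_regress(train, query, k=3):
    """train is a list of (size, price) pairs; returns the mean price of the k nearest sizes."""
    nearest = sorted(train, key=lambda item: abs(item[0] - query))[:k]
    return sum(price for _, price in nearest) / k

houses = [
    (1100, 55),   # size in sq ft, price in lakh (from the example above)
    (1000, 50),
    (1300, 70),
    (600, 30),    # hypothetical extra house, too far away to be picked
    (2000, 120),  # hypothetical extra house, too far away to be picked
]

print(round(knn_regress(houses, 1200, k=3), 2))  # 58.33
```

The only change from classification is the last step: the neighbours' values are averaged instead of their labels being counted.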

MCQs on KNN (Supervised Machine Learning)

1. KNN is mainly based on which idea?

A. Looking at past neighbours

B. Making a mathematical model

C. Training a deep neural network

D. Random guessing

Answer: A

2. In KNN, "K" stands for:

A. Number of features

B. Number of neighbours to consider

C. Number of layers

D. Number of outputs

Answer: B

3. KNN belongs to which type of learning?

A. Reinforcement learning

B. Unsupervised learning

C. Supervised learning

D. Self-learning

Answer: C

4. KNN makes predictions based on:

A. Majority vote of nearest neighbours

B. Training a heavy model

C. Random forest

D. Cross-validation only

Answer: A

5. KNN is called a "lazy learner" because:

A. It doesn't work

B. It trains very slowly

C. It stores data and only works when needed

D. It sleeps during training

Answer: C

6. What happens if K = 1?

A. Prediction is based on farthest neighbour

B. Prediction is based only on the single closest neighbour

C. Model becomes unsupervised

D. KNN stops working

Answer: B

7. Increasing K generally makes predictions:

A. More stable

B. More random

C. Always worse

D. Faster

Answer: A

8. If K is too small (like 1 or 2), KNN may:

A. Improve accuracy

B. Overfit

C. Underfit

D. Stop learning

Answer: B

9. If K is too large (like 50), KNN may:

A. Overfit

B. Underfit

C. Become a neural network

D. Ignore all neighbours

Answer: B

10. KNN requires which metric?

A. Distance

B. Momentum

C. Convolution

D. Reinforcement signal

Answer: A

11. A common distance measure in KNN is:

A. Euclidean distance

B. Manhattan taxi fare

C. Logarithmic distance

D. Quantum distance

Answer: A

12. KNN works best when data is:

A. Unscaled

B. Scaled/normalized

C. Missing values only

D. High-dimensional

Answer: B

13. What is a disadvantage of KNN?

A. Requires model building

B. Fast with huge datasets

C. Slow when dataset is large

D. Works only for images

Answer: C

14. KNN can be used for:

A. Classification only

B. Regression only

C. Both classification and regression

D. Neither

Answer: C

15. In regression, KNN predicts value by:

A. Majority vote

B. Average of nearest neighbours

C. Random value

D. Using logistic function

Answer: B

16. KNN stores:

A. Only model parameters

B. All training data

C. No data

D. Only labels

Answer: B

17. Which problem can KNN struggle with?

A. Small datasets

B. Many irrelevant features

C. Clean data

D. Labeled data

Answer: B

18. KNN is sensitive to:

A. Data scaling

B. Weather

C. Operating system

D. Email clients

Answer: A

19. KNN is best described as:

A. Memory-based

B. Model-based

C. Rule-based

D. Architecture-based

Answer: A

20. When two classes are very close to each other, KNN may:

A. Perform poorly

B. Always predict perfectly

C. Ignore them

D. Merge the classes

Answer: A

21. In KNN, why does a very small value of K usually result in high variance?

A. Because distance calculation is ignored

B. Because the model becomes too simple

C. Because predictions depend heavily on individual data points

D. Because training data is reduced

Answer: C – With small K, even noise or outliers strongly influence prediction, increasing variance.

22. Which situation most strongly causes KNN performance to degrade?

A. Small dataset

B. High dimensional feature space

C. Balanced classes

D. Normalized data

Answer: B – In high dimensions, distance measures lose meaning (curse of dimensionality).

23. In KNN, feature scaling is important because:

A. KNN uses gradient descent

B. Features with larger ranges dominate distance calculation

C. Scaling reduces memory usage

D. Scaling reduces training time

Answer: B – Distance-based methods are biased toward features with larger numeric ranges.

24. Which distance metric is MOST appropriate when features are binary (0/1)?

A. Euclidean distance

B. Manhattan distance

C. Hamming distance

D. Cosine similarity

Answer: C – Hamming distance counts mismatches, ideal for binary features.
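As a quick illustration of this answer, a minimal Hamming distance for binary feature vectors might look like the following (the function name `hamming` is an illustrative choice):

```python
def hamming(a, b):
    """Count the positions at which two equal-length vectors differ."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x != y for x, y in zip(a, b))

# The vectors differ in the 2nd and 3rd positions only.
print(hamming((1, 0, 1, 1), (1, 1, 0, 1)))  # 2
```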

25. If K equals the total number of training samples, KNN will behave like:

A. Nearest centroid classifier

B. Random classifier

C. Majority class classifier

D. Linear regression

Answer: C – All points vote, so the majority class always wins (underfitting).

26. Which statement about KNN training phase is correct?

A. Training is computationally expensive

B. Training builds a parametric model

C. Training simply stores the data

D. Training involves backpropagation

Answer: C – KNN has no explicit training; it memorizes the dataset.

27. Why is KNN referred to as a non-parametric algorithm?

A. It has no hyperparameters

B. It assumes no fixed data distribution

C. It does not require labels

D. It always uses Euclidean distance

Answer: B – KNN makes no assumption about the underlying data distribution.

28. In KNN regression, prediction is typically obtained by:

A. Majority vote

B. Median of neighbours

C. Weighted or unweighted average

D. Maximum likelihood

Answer: C – Regression predicts numeric values using averages of neighbours.

29. Weighted KNN differs from standard KNN because:

A. It uses fewer neighbours

B. Closer neighbours have more influence

C. It ignores distance

D. It eliminates noise completely

Answer: B – Weights are typically assigned in inverse proportion to distance, so nearer neighbours count for more.
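A rough sketch of distance-weighted voting, assuming inverse-distance weights (one common choice among several). The function name `weighted_knn_classify`, the small `eps` constant, and the points are hypothetical.

```python
import math
from collections import defaultdict

def weighted_knn_classify(train, query, k=3, eps=1e-9):
    """Each of the k nearest neighbours votes with weight 1 / distance."""
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    scores = defaultdict(float)
    for features, label in nearest:
        # eps avoids division by zero when a neighbour matches the query exactly.
        scores[label] += 1.0 / (math.dist(features, query) + eps)
    return max(scores, key=scores.get)

# Hypothetical points: one close "red" neighbour and two distant "blue" ones.
points = [((1.0, 1.0), "red"), ((4.0, 4.0), "blue"), ((4.5, 4.0), "blue")]

print(weighted_knn_classify(points, (1.5, 1.5), k=3))  # red
```

A plain majority vote over the same three neighbours would say "blue" (2 votes to 1); weighting lets the single close neighbour outvote the two distant ones.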

30. Which of the following helps reduce overfitting in KNN?

A. Smaller K

B. Larger K

C. No scaling

D. Using K = 1

Answer: B – Larger K smooths decision boundaries, reducing sensitivity to noise.

31. What is the primary computational cost in KNN?

A. Model training

B. Feature extraction

C. Distance computation at prediction time

D. Parameter optimization

Answer: C – KNN calculates distance from the test point to all training points.

32. Curse of dimensionality mainly affects KNN because:

A. Memory usage increases

B. Data becomes sparse and distances become less meaningful

C. Labels are lost

D. Noise disappears

Answer: B – In high dimensions, all points appear similarly distant.

33. Which technique is commonly used to select the optimal value of K?

A. Gradient descent

B. Bootstrapping

C. Cross-validation

D. Forward propagation

Answer: C – Cross-validation evaluates performance for different K values.
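One simple form of this is leave-one-out cross-validation: predict each point from all the others and count how often the prediction is right. The sketch below uses a tiny made-up one-feature dataset with one deliberately noisy label; the function names and candidate K values are illustrative.

```python
import math
from collections import Counter

def knn_classify(train, query, k):
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def loo_accuracy(data, k):
    """Leave-one-out accuracy: classify each point using every other point."""
    correct = 0
    for i, (features, label) in enumerate(data):
        rest = data[:i] + data[i + 1:]
        if knn_classify(rest, features, k) == label:
            correct += 1
    return correct / len(data)

# Hypothetical data: class A on the left, class B on the right.
data = [
    ((1.0,), "A"), ((2.0,), "A"), ((3.0,), "A"), ((4.0,), "A"),
    ((2.5,), "B"),  # deliberately noisy label sitting inside class A
    ((6.5,), "B"), ((7.5,), "B"), ((8.5,), "B"), ((9.5,), "B"),
]

candidates = [1, 3, 5, 7]
scores = {k: loo_accuracy(data, k) for k in candidates}
best_k = max(candidates, key=scores.get)  # first K with the highest accuracy

for k in candidates:
    print(k, round(scores[k], 2))
print("best K:", best_k)
```

On this toy data K = 1 is thrown off by the noisy point, while K = 7 uses almost the whole dataset and drifts toward the majority class, so a middle value scores best.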

34. KNN is most likely to fail when:

A. Classes overlap heavily

B. Dataset is small

C. Data is well separated

D. Features are normalized

Answer: A – Overlapping classes confuse distance-based classification.

35. Compared to decision trees, KNN is:

A. Faster at prediction time

B. More interpretable

C. Slower at prediction time

D. Parametric

Answer: C – KNN computes distances at prediction, unlike pre-built trees.

36. If two classes are highly imbalanced, KNN tends to:

A. Prefer minority class

B. Prefer majority class

C. Ignore distance

D. Become unsupervised

Answer: B – Majority class dominates voting unless weighting is used.

37. Which improvement helps KNN scale better to large datasets?

A. Increasing K

B. Dimensionality reduction

C. Removing labels

D. Using deeper models

Answer: B – Techniques like PCA reduce dimensions and computation cost.

38. KNN decision boundaries are generally:

A. Linear

B. Fixed

C. Non-linear and irregular

D. Parametric

Answer: C – Boundaries adapt to data distribution and can be complex.

39. Which statement about bias-variance trade-off in KNN is correct?

A. Small K → high bias, low variance

B. Large K → high variance, low bias

C. Small K → low bias, high variance

D. K has no effect on bias or variance

Answer: C – Small K fits data closely (low bias) but is sensitive to noise (high variance).

40. In real-time systems, KNN is often avoided because:

A. It is inaccurate

B. It requires frequent retraining

C. Prediction latency is high

D. It cannot handle labels

Answer: C – Distance calculations at inference time are computationally expensive.