Reinforcement Learning Essentials

Reinforcement Learning

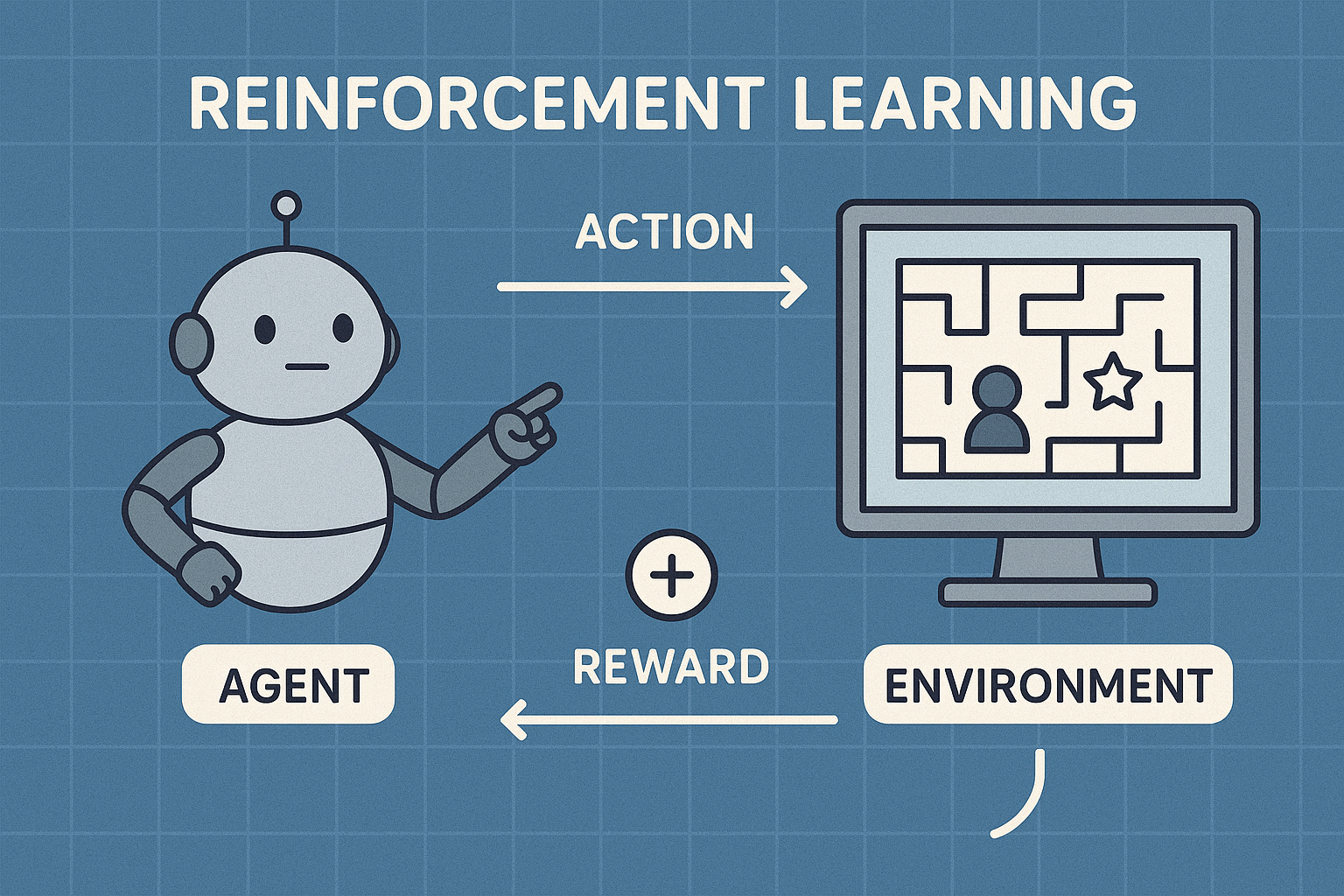

Reinforcement learning (RL) is a branch of machine learning where an agent learns to make decisions by interacting with an environment. Instead of learning from fixed examples, the agent receives rewards or penalties for its actions and gradually discovers which strategies lead to better long-term outcomes. RL is widely used in robotics, game playing, recommendation systems, and real-world optimization problems where sequential decision-making is crucial.

Key ideas in reinforcement learning include:

- State – the situation the agent observes

- Action – what the agent can do

- Reward – feedback signal for each action

- Policy – the agent’s strategy for choosing actions

- Value – expected long-term return from states or actions
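To make these terms concrete, here is a minimal Python sketch of the agent-environment loop. The `LineWorld` environment, its reward values, and the helpers `random_policy` and `run_episode` are hypothetical, invented for illustration; they do not come from any RL library. The value of a state is the return an agent can expect from it, and `run_episode` measures one sample of that return.

```python
import random


class LineWorld:
    """Hypothetical environment: positions 0..goal on a line; the episode ends at goal."""

    def __init__(self, goal=4):
        self.goal = goal
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state  # state: what the agent observes

    def step(self, action):
        # action: -1 (left) or +1 (right); the position is clamped to the line
        self.state = max(0, min(self.goal, self.state + action))
        done = self.state == self.goal
        reward = 10.0 if done else -1.0  # reward: feedback for this step
        return self.state, reward, done


def random_policy(state):
    # policy: maps a state to an action (this one ignores the state)
    return random.choice([-1, +1])


def run_episode(env, policy, max_steps=100):
    """Run one episode and return the total reward collected (the return)."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)
        state, reward, done = env.step(action)
        total_reward += reward
        if done:
            break
    return total_reward
```

A policy that always moves right, `run_episode(LineWorld(), lambda s: +1)`, returns 7.0: three steps at -1 followed by +10 for reaching the goal. The random policy can only match that score by never moving left, so it usually does worse.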

Modern RL combines ideas from control theory, dynamic programming, and deep learning. Algorithms such as Q-learning, policy gradients, and actor–critic methods, when combined with deep neural networks, allow agents to learn complex behaviors directly from raw inputs like images or sensor data. Training involves an exploration–exploitation trade-off: the agent must balance trying new actions against using what it already knows works well.
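One common way to handle the exploration-exploitation trade-off is epsilon-greedy action selection. The sketch below assumes the agent keeps a list of estimated action values; the function name `epsilon_greedy` is hypothetical, and epsilon-greedy is only one of several exploration strategies.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick an action index from a list of estimated action values.

    With probability epsilon, explore: choose an action uniformly at random.
    Otherwise, exploit: choose the action with the highest estimate.
    """
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])
```

With `epsilon=0.1` and three actions, the best-valued action is chosen with probability 0.9 + 0.1/3, roughly 93% of the time; the remaining picks keep testing the alternatives in case their estimates are wrong.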

Because RL focuses on learning through trial and error, it is especially powerful in environments that are too complex to model explicitly. From autonomous vehicles to industrial process control, reinforcement learning provides a flexible framework for building systems that improve their performance over time through experience.

In simple terms:

Actions that lead to favorable outcomes are reinforced, while actions leading to unfavorable outcomes are discouraged.

Example 1: Learning to Ride a Bicycle

When a child learns to ride a bicycle, the learning process closely resembles reinforcement learning:

- Maintaining balance and moving forward results in success and satisfaction

- Losing balance results in falling and discomfort

Through repeated attempts, the child gradually learns the optimal way to balance and pedal.

- Positive outcome (reward): Smooth and stable movement

- Negative outcome (penalty): Falling or instability

No formal instruction is required at every step; learning occurs through experience and feedback.

Example 2: Selecting the Optimal Route to the Workplace

An individual commuting to work may experiment with multiple routes:

- Route A consistently results in congestion and delays

- Route B results in a faster and smoother commute

Over time, the individual adopts Route B as the preferred choice.

This decision-making process is guided by observed outcomes rather than predefined rules.

Example 3: Training a Pet Using Rewards

Pet training provides a classical illustration of reinforcement learning principles:

- Desired behavior (e.g., sitting on command) is rewarded

- Undesired behavior receives no reward

Through repeated reinforcement, the pet associates the correct action with a positive outcome and modifies its behavior accordingly.

A Simple Mathematical Example of Reinforcement Learning

Scenario: Learning the Best Route to Office

Assume you travel to the office every day and can choose between two routes:

- Route A (often has traffic)

- Route B (usually smooth)

Your objective is to reach the office on time, which we represent using numerical rewards.

Step 1: Define Rewards

- If you reach the office on time, you receive a reward of +10

- If you get stuck in traffic, you receive a penalty (negative reward) of –5

Step 2: Initial Knowledge

At the beginning, you have no prior experience.

So your estimated reward (Q-value) for each route starts at 0.

Q(A)=0, Q(B)=0

Step 3: Learning Formula (Simplified Q-Learning Update)

Each time you choose a route, you update its value using:

$$Q_{\text{new}} = Q_{\text{old}} + \alpha \times (\text{Reward} - Q_{\text{old}})$$
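This formula is a special case of the general Q-learning update, written below with a discount factor $\gamma$, a state $s$, and a next state $s'$ (symbols not used elsewhere in this example). Because each commute is a single decision that ends on arrival, there is no next state, the future-value term is taken as zero, and with only one starting situation the state can be dropped:

$$Q(s,a) \leftarrow Q(s,a) + \alpha\left[r + \gamma \max_{a'} Q(s',a') - Q(s,a)\right]$$

$$\text{one-step task (no } s'\text{):}\quad Q(a) \leftarrow Q(a) + \alpha\left[r - Q(a)\right]$$

Reading $Q(a)$ before the update as $Q_{\text{old}}$, after it as $Q_{\text{new}}$, and $r$ as Reward recovers the formula in Step 3.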

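In the update formula, α is the learning rate, a number between 0 and 1 that controls how far each update moves the estimate toward the latest reward. Assume: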

α=0.5
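The update rule translates directly into a one-line Python function. The name `update_q` is a hypothetical helper; the loop below shows how, with α = 0.5, repeated +10 rewards move the estimate halfway toward 10 each time.

```python
def update_q(q_old, reward, alpha=0.5):
    """Simplified Q-learning update: move q_old a fraction alpha toward reward."""
    return q_old + alpha * (reward - q_old)


# Receiving +10 on four consecutive days, starting from no experience.
q = 0.0
history = []
for _ in range(4):
    q = update_q(q, 10.0)
    history.append(q)
print(history)  # [5.0, 7.5, 8.75, 9.375]
```

Each update closes half of the remaining gap to 10, so the estimate approaches the reward without overshooting. A smaller α would move more slowly but react less to a single unusual day.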

Step 4: Day 1 – You Choose Route A

Route A has traffic, so the reward is –5.

Q(A)=0+0.5×(−5−0)

Q(A)=−2.5

Your belief about Route A becomes negative.

Step 5: Day 2 – You Choose Route B

Route B is smooth, so the reward is +10.

Q(B)=0+0.5×(10−0)

Q(B)=5

Your belief about Route B improves significantly.

Step 6: Day 3 – You Choose Route B Again

Since Route B has a higher expected value, you choose it again.

It again gives a reward of +10.

Q(B)=5+0.5×(10−5)

Q(B)=7.5

Step 7: Final Decision

Now you have learned:

Q(A)=−2.5, Q(B)=7.5

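Since Route B has a much higher value, a greedy choice picks Route B from now on. In practice, an RL agent usually still tries Route A occasionally (exploration), in case traffic conditions change.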
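The three days of learning can be replayed in a short Python script that reproduces the values in Step 7. The names `REWARD`, `days`, and `update_q` are illustrative choices, not part of the original example, and the final step assumes a purely greedy choice.

```python
def update_q(q_old, reward, alpha):
    # Simplified (one-step) Q-learning update from Step 3.
    return q_old + alpha * (reward - q_old)


REWARD = {"on_time": 10.0, "traffic": -5.0}  # Step 1: rewards
q = {"A": 0.0, "B": 0.0}                     # Step 2: no prior experience
alpha = 0.5

# Steps 4-6: the route chosen each day and the outcome observed.
days = [("A", "traffic"), ("B", "on_time"), ("B", "on_time")]
for route, outcome in days:
    q[route] = update_q(q[route], REWARD[outcome], alpha)

# Step 7: a greedy agent picks the route with the highest Q-value.
best_route = max(q, key=q.get)
print(q, best_route)  # {'A': -2.5, 'B': 7.5} B
```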

Reinforcement Learning and Goal-Oriented Systems

A goal-oriented system is a system that is built to achieve a specific objective.

It observes the situation, takes actions, and checks whether those actions are helping it move closer to its goal.

For example, a navigation app has one goal: reach the destination.

Every decision it makes (turn left, turn right, or go straight) is aimed at achieving that goal.

How Reinforcement Learning Fits In

Reinforcement learning is a method that allows a system to learn how to achieve a goal through experience rather than fixed rules.

The system is not told the best action in advance.

Instead, it tries different actions and learns from the results.

- If an action helps achieve the goal, the system receives a positive reward

- If an action moves it away from the goal, the system receives a negative reward

Over time, the system learns which actions are best.

Simple Goal-Oriented Example

Imagine a robot whose goal is to reach a charging station.

- When the robot moves closer to the charger, it gets a small reward

- When it moves away, it gets a penalty

- When it reaches the charger, it gets a big reward

The robot does not know the path at first.

By trying different movements and observing rewards, it slowly learns the best path.

This is how reinforcement learning produces goal-oriented behavior.
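A minimal sketch of the charging-station example using tabular Q-learning. Everything specific here is an assumption for illustration: the robot moves along a hypothetical six-cell corridor, the rewards are +1 for a step closer, -1 for a step away (or into the wall), and +10 for reaching the charger, and the hyperparameters (α = 0.5, discount γ = 0.9, exploration rate 0.2) are arbitrary choices.

```python
import random

# Hypothetical corridor: positions 0..5, robot starts at 0, charger at 5.
N = 6
CHARGER = N - 1
ACTIONS = (-1, +1)  # move left, move right


def step(pos, action):
    """Apply an action and return (new_pos, reward, done)."""
    new_pos = max(0, min(CHARGER, pos + action))
    if new_pos == CHARGER:
        return new_pos, 10.0, True   # big reward: reached the charger
    if new_pos > pos:
        return new_pos, 1.0, False   # small reward: moved closer
    return new_pos, -1.0, False      # penalty: moved away or bumped the wall


def train(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N) for a in ACTIONS}
    for _ in range(episodes):
        pos, done = 0, False
        while not done:
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)                       # explore
            else:
                action = max(ACTIONS, key=lambda a: q[(pos, a)])   # exploit
            new_pos, reward, done = step(pos, action)
            # Q-learning target: reward plus discounted best value of the next position.
            future = 0.0 if done else max(q[(new_pos, a)] for a in ACTIONS)
            q[(pos, action)] += alpha * (reward + gamma * future - q[(pos, action)])
            pos = new_pos
    return q


q = train()
# Greedy policy: best action at every position before the charger.
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(CHARGER)}
print(policy)  # should map every position to +1 (move right)
```

Because each target adds the discounted value of the next position, the large reward at the charger propagates backward along the corridor until every position prefers moving right.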

Why Rewards Represent Goals

In reinforcement learning, the goal is not written as a sentence.

It is written as a reward function.

The system does not think in words like "reach safely" or "be fast."

It thinks in numbers:

- Higher numbers mean "good"

- Lower numbers mean "bad"

By maximizing total reward, the system works toward its goal, provided the reward function actually captures that goal.
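As an illustration of a goal written as numbers, here is a hypothetical reward function for a driving task. The event names and values are assumptions chosen for the example: a large penalty encodes "reach safely", a small per-step cost encodes "be fast", and a bonus marks arrival.

```python
def reward(event):
    # Illustrative values only, not taken from any real system.
    if event == "arrived":
        return 10.0     # the goal itself
    if event == "collision":
        return -100.0   # "reach safely": crashes are heavily penalized
    return -1.0         # "be fast": every extra time step costs a little


def total_reward(events):
    return sum(reward(e) for e in events)


quick_safe = ["drive", "drive", "arrived"]
slow_safe = ["drive"] * 6 + ["arrived"]
crash = ["drive", "collision"]

for name, events in [("quick_safe", quick_safe), ("slow_safe", slow_safe), ("crash", crash)]:
    print(name, total_reward(events))
# quick_safe 8.0
# slow_safe 4.0
# crash -101.0
```

The quick, safe trip scores highest, the slow trip less, and the crash far lower, so an agent that maximizes total reward is pushed toward arriving quickly and safely without ever being told those words.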

Multi-Step Goal Achievement

Many goals cannot be achieved in one step.

For example, reaching the office requires:

- Choosing a route

- Driving carefully

- Avoiding traffic

Reinforcement learning allows the system to learn that:

- Some actions may not give an immediate reward

- Yet they can lead to a better result later

This ability to consider future rewards is what makes reinforcement learning suitable for goal-oriented systems.
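Considering future rewards is usually formalized as a discounted return, where a reward received t steps later is multiplied by a discount factor γ raised to the power t. The discount factor and the two reward sequences below are illustrative assumptions, not part of the original text.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of rewards, weighting the reward at step t by gamma**t."""
    return sum(r * gamma**t for t, r in enumerate(rewards))


# Option 1: a reward right away, nothing afterwards.
grab_now = [5.0, 0.0, 0.0]
# Option 2: no immediate reward, but a larger reward two steps later.
invest = [0.0, 0.0, 20.0]

print(discounted_return(grab_now))  # 5.0
print(discounted_return(invest))    # about 16.2
```

Even after discounting, the delayed option is worth more (about 16.2 versus 5.0), so an agent that maximizes return will accept zero reward now for the better result later.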

Learning a Strategy (Policy)

Over time, the system develops a strategy for achieving its goal.

This strategy answers the question:

"What should I do in this situation to get the best long-term result?"

In reinforcement learning, this strategy is called a policy.

A good policy means the system consistently takes actions that move it toward its goal.
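A policy can be represented as a plain mapping from states to actions. The sketch below derives a greedy policy from a hypothetical Q-table; the state and action names are invented for illustration, and greedy selection is one simple way to turn value estimates into a policy.

```python
# Hypothetical Q-table: estimated long-term reward for each (state, action) pair.
q_table = {
    ("at_home", "route_A"): -2.5,
    ("at_home", "route_B"): 7.5,
    ("at_junction", "turn_left"): 1.0,
    ("at_junction", "turn_right"): 4.0,
}


def greedy_policy(q_table):
    """Build a policy (state -> action) that picks the highest-valued action per state."""
    best = {}
    for (state, action), value in q_table.items():
        if state not in best or value > q_table[(state, best[state])]:
            best[state] = action
    return best


policy = greedy_policy(q_table)
print(policy)  # {'at_home': 'route_B', 'at_junction': 'turn_right'}
```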

Why Reinforcement Learning is Ideal for Goal-Oriented Systems

Reinforcement learning works best when:

- The goal is long-term

- The environment changes

- The best actions are not known in advance

- Decisions must be made step by step

Many real-world goal-oriented systems operate under exactly these conditions.

MCQs: Reinforcement Learning & Goal-Oriented Systems

1. Reinforcement learning is best described as a system that learns by

A. Using labeled datasets

B. Following predefined rules

C. Interacting with an environment and receiving feedback

D. Memorizing past data

✅ Answer: C

Explanation:

Reinforcement learning learns by trial and error, using rewards and penalties as feedback.

2. In reinforcement learning, the "goal" of the system is represented by

A. Training data

B. Reward function

C. Neural network

D. Action space

✅ Answer: B

Explanation:

The reward function numerically encodes the goal. Maximizing reward means achieving the goal.

3. Which component decides what action to take in a goal-oriented RL system?

A. Environment

B. Reward

C. Agent

D. State

✅ Answer: C

Explanation:

The agent is the decision-maker that selects actions to achieve the goal.

4. A system that aims to maximize long-term reward rather than immediate reward is an example of

A. Supervised learning

B. Rule-based system

C. Reinforcement learning

D. Unsupervised learning

✅ Answer: C

Explanation:

Reinforcement learning focuses on cumulative (long-term) reward, not just immediate outcomes.

5. Which of the following best defines a goal-oriented system?

A. A system that stores data

B. A system that follows fixed instructions

C. A system that selects actions to achieve a specific objective

D. A system that only predicts outcomes

✅ Answer: C

Explanation:

A goal-oriented system continuously chooses actions that help it reach a desired objective.

6. In reinforcement learning, a policy is

A. A database of rewards

B. A rule for updating Q-values

C. A strategy that maps states to actions

D. A measure of accuracy

✅ Answer: C

Explanation:

A policy tells the agent what action to take in a given state to achieve its goal.

7. Why is reinforcement learning suitable for goal-oriented problems?

A. Goals never change

B. Rewards are always immediate

C. Decisions affect future outcomes

D. Labels are available

✅ Answer: C

Explanation:

RL handles sequential decisions where actions have delayed consequences.

8. If a system receives +10 for success and –10 for failure, these values represent

A. States

B. Actions

C. Rewards

D. Policies

✅ Answer: C

Explanation:

Numerical feedback guiding learning is called a reward.

9. What happens if a reward function is poorly designed?

A. The system stops learning

B. The system may learn unintended behavior

C. The system becomes supervised

D. The system ignores the goal

✅ Answer: B

Explanation:

Incorrect rewards can cause goal misalignment, where the system optimizes the wrong behavior.

10. Which learning paradigm does NOT require labeled input-output pairs?

A. Supervised learning

B. Reinforcement learning

C. Regression

D. Classification

✅ Answer: B

Explanation:

Reinforcement learning relies on rewards, not labeled datasets.

11. In a goal-oriented RL system, what does "maximizing cumulative reward" mean?

A. Maximizing one-time reward

B. Maximizing reward at each step

C. Maximizing total reward over time

D. Maximizing accuracy

✅ Answer: C

Explanation:

The objective is to optimize overall long-term success, not short-term gains.

12. Which real-world problem is best modeled as a goal-oriented reinforcement learning task?

A. Email spam classification

B. Image labeling

C. Robot navigation

D. Data sorting

✅ Answer: C

Explanation:

Robot navigation involves sequential decisions and a clear goal, making it ideal for RL.

13. The agent learns the best sequence of actions by

A. Memorizing all states

B. Maximizing random behavior

C. Repeating actions with higher rewards

D. Following expert rules

✅ Answer: C

Explanation:

Actions that lead to higher rewards are reinforced and repeated.

14. Which statement is TRUE about reinforcement learning and goal-oriented systems?

A. Goals are written as natural language instructions

B. Goals are represented using rewards

C. Goals are ignored during learning

D. Goals require labeled data

✅ Answer: B

Explanation:

In RL, goals are mathematically represented through reward functions.

15. A navigation system that learns better routes over time using feedback is an example of

A. Rule-based AI

B. Supervised learning

C. Goal-oriented reinforcement learning

D. Unsupervised clustering

✅ Answer: C

Explanation:

The system improves routes by learning from experience, which is reinforcement learning.

💡 Remember:

Goal-oriented system = "What should I achieve?"

Reinforcement learning = "How do I learn to achieve it?"