Association Rules in Unsupervised Learning

Association rule learning is an unsupervised machine learning technique used to discover interesting relationships, patterns, and co-occurrences within large datasets. Instead of predicting a specific target label, it explores how items or events tend to appear together, which makes it especially popular in market basket analysis, recommendation systems, and customer behavior insights. Key concepts include support (how frequently items appear together), confidence (how often the "then" part of a rule appears when the "if" part does), and lift (how much more often the items appear together than random chance would predict). Algorithms like Apriori and FP-Growth efficiently search through vast combinations of items to find rules that are both frequent and practically useful.

In practice, association rules help organizations understand which products are frequently bought together, which features users tend to use in combination, or which events commonly follow one another. This insight can drive smarter product bundling, cross-selling strategies, and personalized recommendations. Because it is unsupervised, the method does not require labeled data; instead, it relies on exploring raw transactional or event logs. Careful tuning of minimum support and confidence thresholds is essential to filter out noise and focus on the most meaningful patterns. When applied thoughtfully, association rule learning transforms complex, high-volume data into clear, actionable knowledge for decision-makers.

Association Rule Mining: A Complete Guide with Examples & MCQs

Association Rule Mining is one of the most powerful techniques in data mining and business analytics. It helps organizations discover hidden patterns, relationships, and correlations in large datasets — especially in transactional data.

If you've ever seen:

- "Customers who bought X also bought Y"
- Product bundling suggestions on Amazon
- Supermarket combo offers

You've witnessed Association Rule Mining in action.

1. What is Association Rule Mining?

Association Rule Mining is a data mining technique used to discover interesting relationships (associations) between variables in large datasets.

It answers questions like:

- Which products are frequently purchased together?
- What services are commonly used together?
- What patterns exist in customer behavior?

It is widely used in:

- Retail analytics
- E-commerce recommendation systems
- Healthcare data analysis
- Fraud detection
- Cybersecurity event correlation

2. Key Terminology in Association Rules

2.1 Itemset

A collection of one or more items.

Example:

- {Milk, Bread}
- {Laptop, Mouse}

2.2 Support

Support measures how frequently an itemset appears in the dataset.

$$\text{Support}(A) = \frac{\text{Number of transactions containing } A}{\text{Total number of transactions}}$$

Example:

If Milk appears in 4 out of 10 transactions:

Support(Milk) = 4/10 = 0.4

2.3 Confidence

Confidence measures how often items in Y appear in transactions that contain X.

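$$\text{Confidence}(X \rightarrow Y) = \frac{\text{Support}(X \cup Y)}{\text{Support}(X)}$$

Here Support(X ∪ Y) is the support of the combined itemset, that is, the fraction of transactions that contain both X and Y.

It represents the conditional probability:

$$P(Y \mid X)$$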

2.4 Lift

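Lift measures how much more likely Y is to be purchased when X is purchased, compared with how often Y is purchased overall.

$$\text{Lift}(X \rightarrow Y) = \frac{\text{Confidence}(X \rightarrow Y)}{\text{Support}(Y)} = \frac{\text{Support}(X \cup Y)}{\text{Support}(X) \times \text{Support}(Y)}$$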

- Lift > 1 → Positive association
- Lift = 1 → Independent
- Lift < 1 → Negative association

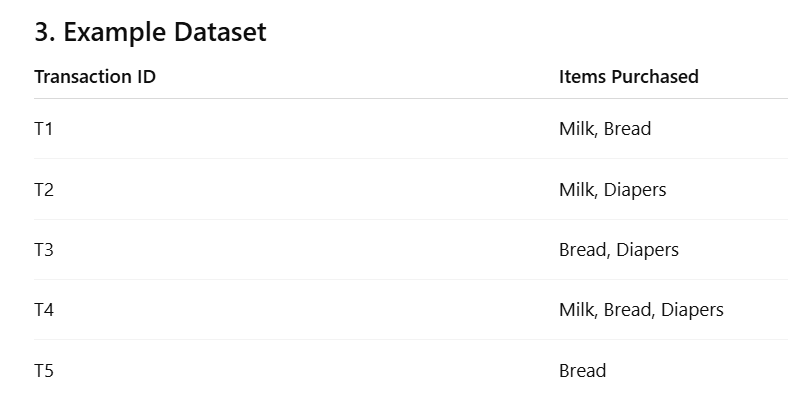

Let's calculate:

- Support(Milk) = 3/5 = 0.6
- Support(Bread) = 4/5 = 0.8
- Support(Milk, Bread) = 2/5 = 0.4

Confidence(Milk → Bread) = 0.4 / 0.6 ≈ 0.667

Lift(Milk → Bread) = 0.667 / 0.8 ≈ 0.83

Since Lift < 1, Milk and Bread are weakly negatively associated in this dataset.
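The calculation above can be reproduced with a few lines of Python. The five transactions below are a hypothetical reconstruction, chosen only so the counts match the worked numbers (Milk in 3, Bread in 4, both in 2); the actual table in the image may list them differently. The helper names `support`, `confidence`, and `lift` are illustrative, and `Fraction` keeps the arithmetic exact.

```python
from fractions import Fraction

# Hypothetical transactions: only the counts (Milk 3, Bread 4, both 2) match the example.
transactions = [
    {"Milk", "Bread"},
    {"Milk", "Bread"},
    {"Milk"},
    {"Bread"},
    {"Bread"},
]

def support(itemset, transactions):
    """Fraction of transactions that contain every item in `itemset`."""
    hits = sum(1 for t in transactions if itemset <= t)
    return Fraction(hits, len(transactions))

def confidence(x, y, transactions):
    """Confidence(X -> Y) = Support(X ∪ Y) / Support(X)."""
    return support(x | y, transactions) / support(x, transactions)

def lift(x, y, transactions):
    """Lift(X -> Y) = Confidence(X -> Y) / Support(Y)."""
    return confidence(x, y, transactions) / support(y, transactions)

milk, bread = {"Milk"}, {"Bread"}
print(float(support(milk, transactions)))                      # 0.6
print(float(support(bread, transactions)))                     # 0.8
print(float(support(milk | bread, transactions)))              # 0.4
print(round(float(confidence(milk, bread, transactions)), 3))  # 0.667
print(round(float(lift(milk, bread, transactions)), 3))        # 0.833
```

The exact lift is 5/6 ≈ 0.833, below 1, which matches the conclusion that the two items are weakly negatively associated here.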

🛒 3. Imagine a Small Grocery Store

Suppose 100 customers visit a shop today.

We want to understand shopping patterns.

✅ 1. What is SUPPORT? (Very Simple)

👉 Support means: How common is something?

If 40 out of 100 people bought Milk, then:

- Milk is bought by 40% of customers.
- So, Support of Milk = 40%

🔹 In simple words:

Support tells us how popular an item (or combination) is.

🧺 Example 1: Single Item

Out of 100 customers:

- 40 bought Milk

Support (Milk) = 40%

That's it. Just popularity.

🧺 Example 2: Two Items Together

Out of 100 customers:

- 30 bought both Milk and Bread together.

Support (Milk & Bread) = 30%

Meaning: 30% of customers buy them together.

💡 So Support = "How frequently does this happen?"

It does NOT tell us whether one causes the other.

It only tells us how common it is.

🚀 2. What is LIFT? (Very Easy Meaning)

👉 Lift answers this question:

"Are two items really connected, or is it just a coincidence?"

🧠 Think Like This:

If:

- 40% buy Milk
- 50% buy Bread

Even randomly, some people will buy both.

So if we see 30% buying both —

Is that special? Or just normal?

Lift tells us that.

🎯 Lift Meaning in Very Simple Words

Lift checks:

👉 "Do people buy Bread MORE OFTEN when they buy Milk?"

📊 Simple Example

Out of 100 customers:

- 40 buy Milk
- 50 buy Bread
- 30 buy both

Now think logically:

If buying Milk had no effect on buying Bread, then about 40% × 50% = 20% of customers should buy both just by chance.

But we see 30% buying both.

30% is more than 20%.

That means:

Milk and Bread are bought together more often than chance alone would predict.

So:

👉 Lift is greater than 1

👉 There is a positive relationship
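Using the confidence and lift formulas from Section 2, the same 100-customer numbers give an exact value for lift:

$$
\text{Confidence}(\text{Milk} \rightarrow \text{Bread}) = \frac{\text{Support}(\text{Milk} \cup \text{Bread})}{\text{Support}(\text{Milk})} = \frac{0.30}{0.40} = 0.75
$$

$$
\text{Lift}(\text{Milk} \rightarrow \text{Bread}) = \frac{\text{Confidence}(\text{Milk} \rightarrow \text{Bread})}{\text{Support}(\text{Bread})} = \frac{0.75}{0.50} = 1.5
$$

This is the same as dividing the observed 30% by the expected 20%: Milk buyers are 1.5 times as likely to buy Bread as customers overall.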

4. Apriori Algorithm

The most famous algorithm for association rule mining is:

Apriori Algorithm

Principle:

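If an itemset is frequent, all its subsets must also be frequent. Equivalently, if an itemset is infrequent, every larger itemset that contains it is also infrequent, so those larger itemsets can be skipped.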

Steps:

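- Generate frequent 1-itemsets (single items that meet minimum support)
- Generate candidate 2-itemsets by combining the frequent 1-itemsets
- Prune candidates that do not meet minimum support
- Repeat for larger itemsets (3-itemsets, 4-itemsets, ...) until no new frequent itemsets are found
- Generate rules from the frequent itemsets and keep those that meet the confidence threshold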
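The steps above can be sketched in Python. This is a minimal, unoptimized sketch written for illustration: the function names `apriori` and `rules`, the threshold expressed as a raw count (`min_count`), and the small Laptop/Mouse/Bag dataset are all hypothetical, not taken from any library. It shows the level-wise join, prune, and count loop, followed by rule generation with a confidence threshold.

```python
from itertools import combinations


def apriori(transactions, min_count):
    """Return {frozenset(itemset): count} for every itemset found in at least
    `min_count` transactions (minimal level-wise sketch, not optimized)."""
    transactions = [frozenset(t) for t in transactions]

    def count_frequent(candidates):
        # Count each candidate against every transaction; keep the frequent ones.
        counts = {c: 0 for c in candidates}
        for t in transactions:
            for c in candidates:
                if c <= t:
                    counts[c] += 1
        return {c: n for c, n in counts.items() if n >= min_count}

    # Level 1: frequent single items.
    frequent = count_frequent({frozenset([i]) for t in transactions for i in t})
    result = dict(frequent)
    k = 1
    while frequent:
        prev = set(frequent)
        # Join: combine frequent k-itemsets into (k+1)-item candidates.
        candidates = {a | b for a in prev for b in prev if len(a | b) == k + 1}
        # Prune: drop any candidate that has an infrequent k-item subset.
        candidates = {
            c for c in candidates
            if all(frozenset(s) in prev for s in combinations(c, k))
        }
        frequent = count_frequent(candidates)
        result.update(frequent)
        k += 1
    return result


def rules(frequent, n_transactions, min_confidence):
    """Yield (X, Y, confidence, lift) for each rule X -> Y meeting min_confidence."""
    for itemset, n_both in frequent.items():
        for r in range(1, len(itemset)):
            for lhs in map(frozenset, combinations(itemset, r)):
                rhs = itemset - lhs
                confidence = n_both / frequent[lhs]
                lift = confidence / (frequent[rhs] / n_transactions)
                if confidence >= min_confidence:
                    yield lhs, rhs, confidence, lift


# Hypothetical toy data (not one of the examples in this guide).
data = [
    {"Laptop", "Mouse", "Bag"},
    {"Laptop", "Mouse"},
    {"Laptop", "Mouse", "Bag"},
    {"Mouse"},
    {"Laptop", "Bag"},
]
frequent = apriori(data, min_count=3)
for itemset, n in sorted(frequent.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), n)
for lhs, rhs, conf, lift in rules(frequent, len(data), min_confidence=0.7):
    print(sorted(lhs), "->", sorted(rhs), round(conf, 2), round(lift, 2))
```

With `min_count=3`, the single items plus the pairs {Bag, Laptop} and {Laptop, Mouse} survive. The pair {Mouse, Bag} appears only twice, so the candidate {Laptop, Mouse, Bag} is removed by the prune step without ever being counted. For real workloads, a maintained library implementation (or FP-Growth) is a better choice than this sketch.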

🛒 Example 1: Small Grocery Store

You check 10 customer bills.

Bills:

- Milk, Bread
- Milk, Bread
- Milk
- Bread
- Milk, Bread
- Milk
- Bread
- Milk, Eggs
- Bread
- Milk, Bread

✅ Step 1: Count Single Items

- Milk → 7 times
- Bread → 7 times
- Eggs → 1 time

Now you decide:

👉 "I only care about items bought at least 3 times."

So:

- Milk ✅
- Bread ✅
- Eggs ❌ (too rare, remove)

✅ Step 2: Make Pairs (Only from Popular Items)

Now only check:

Milk & Bread

How many times?

It appears 4 times.

Since 4 ≥ 3 → keep it.

🚀 Final Result

Apriori says:

Popular items and combinations:

- Milk
- Bread
- Milk & Bread together

Eggs was removed early.

✔ Saved time

✔ Ignored weak items

✔ Focused on frequent patterns
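Example 1 can be traced step by step in Python. This is a sketch with illustrative variable names (`bills`, `MIN_COUNT`); it mirrors only the two steps above rather than implementing a full Apriori loop.

```python
from collections import Counter
from itertools import combinations

# The 10 bills from Example 1, one set of items per bill.
bills = [
    {"Milk", "Bread"}, {"Milk", "Bread"}, {"Milk"}, {"Bread"}, {"Milk", "Bread"},
    {"Milk"}, {"Bread"}, {"Milk", "Eggs"}, {"Bread"}, {"Milk", "Bread"},
]
MIN_COUNT = 3  # "I only care about items bought at least 3 times."

# Step 1: count single items and keep only the popular ones.
item_counts = Counter(item for bill in bills for item in bill)
popular = sorted(item for item, n in item_counts.items() if n >= MIN_COUNT)
print(item_counts["Milk"], item_counts["Bread"], item_counts["Eggs"])  # 7 7 1
print(popular)  # ['Bread', 'Milk']

# Step 2: build pairs only from popular items (so no Eggs pairs), then count them.
pair_counts = {
    pair: sum(1 for bill in bills if set(pair) <= bill)
    for pair in combinations(popular, 2)
}
frequent_pairs = {pair: n for pair, n in pair_counts.items() if n >= MIN_COUNT}
print(frequent_pairs)  # {('Bread', 'Milk'): 4}
```

Because Eggs is filtered out in Step 1, the pair Milk & Eggs is never generated or counted.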

🍿 Example 2: Movie Theatre

You observe 8 customers.

- Popcorn, Drink
- Popcorn
- Drink
- Popcorn, Drink
- Popcorn
- Drink
- Popcorn, Drink
- Nachos

Step 1: Count Single Items

- Popcorn → 5
- Drink → 5
- Nachos → 1

Minimum requirement = 2

So:

- Popcorn ✅
- Drink ✅
- Nachos ❌

Step 2: Check Pair

Popcorn & Drink → 3 times

3 ≥ 2 → Keep

Final Pattern

People often buy:

Popcorn + Drink

So the theatre can create:

🎯 Combo Offer
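The examples stop once the frequent itemsets are found, but the last Apriori step turns them into rules and scores them. A minimal sketch for the theatre data, assuming a hypothetical helper `rule_metrics` that applies the Section 2 formulas directly to the raw orders:

```python
# The 8 theatre customers from Example 2.
orders = [
    {"Popcorn", "Drink"}, {"Popcorn"}, {"Drink"}, {"Popcorn", "Drink"},
    {"Popcorn"}, {"Drink"}, {"Popcorn", "Drink"}, {"Nachos"},
]

def rule_metrics(lhs, rhs, transactions):
    """Return (support, confidence, lift) for the rule lhs -> rhs."""
    n = len(transactions)
    n_lhs = sum(1 for t in transactions if lhs <= t)
    n_rhs = sum(1 for t in transactions if rhs <= t)
    n_both = sum(1 for t in transactions if (lhs | rhs) <= t)
    support = n_both / n
    confidence = n_both / n_lhs
    lift = confidence / (n_rhs / n)  # Lift = Confidence / Support(rhs)
    return support, confidence, lift

s, c, l = rule_metrics({"Popcorn"}, {"Drink"}, orders)
print(f"support={s:.3f} confidence={c:.2f} lift={l:.2f}")
# support=0.375 confidence=0.60 lift=0.96
```

On these eight customers, Popcorn → Drink is frequent but its lift is just under 1, so on this tiny sample the pair appears together mainly because both items are popular on their own (compare Q12 below). Checking lift before relying on frequency alone is a cheap safeguard.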

🏥 Example 3: Hospital Case

10 patients:

- 6 have Fever
- 6 have Cough
- 5 have both Fever & Cough
- 1 has Headache only

Minimum requirement = 3

Headache ❌ removed

Now check Fever & Cough

Appears 5 times → Strong pattern

Hospital learns:

👉 Fever + Cough often occur together.

💻 Example 4: Online Shopping

Out of 20 customers:

- 12 buy Laptop
- 10 buy Mouse
- 8 buy Laptop & Mouse
- 2 buy Printer

Minimum support = 5

Printer ❌ removed

Laptop & Mouse → 8 times → strong
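Since this example gives full counts (20 customers), the pair can also be scored with the confidence and lift formulas from Section 2:

$$
\text{Confidence}(\text{Laptop} \rightarrow \text{Mouse}) = \frac{\text{Support}(\text{Laptop} \cup \text{Mouse})}{\text{Support}(\text{Laptop})} = \frac{8/20}{12/20} = \frac{8}{12} \approx 0.67
$$

$$
\text{Lift}(\text{Laptop} \rightarrow \text{Mouse}) = \frac{\text{Confidence}(\text{Laptop} \rightarrow \text{Mouse})}{\text{Support}(\text{Mouse})} = \frac{8/12}{10/20} = \frac{4}{3} \approx 1.33
$$

Lift above 1 means Laptop buyers pick up a Mouse more often than customers in general (about 67% versus 50%), which supports the combo.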

E-commerce site creates:

"Buy Laptop + Mouse Combo"

🧠 Why Apriori Is Smart

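Instead of checking every possible combination, such as:

- Laptop + Printer + Mouse
- Milk + Eggs + Butter

it removes rare items first. Any combination that contains a rare item can never be frequent, so Apriori never spends time counting it.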

5. Applications of Association Rule Mining

1. Market Basket Analysis

Identify products frequently purchased together.

2. Recommendation Systems

Amazon-style product recommendations.

3. Healthcare

Discover disease-drug relationships.

4. Cybersecurity

Detect unusual event combinations (useful in SIEM analysis).

5. Fraud Detection

Find suspicious transaction patterns.

6. Advantages

- Easy to interpret
- Unsupervised learning method
- Highly useful in retail analytics
- Scalable with algorithms like FP-Growth

7. Limitations

- Can generate too many rules
- High computational cost, especially on large datasets with low support thresholds
- Requires careful threshold tuning
- May produce misleading rules if lift is ignored

MCQs on Association Rule Mining (With Answers & Explanations)

Q1.

In a store of 100 customers, 40 buy Milk.

What is the support of Milk?

A. 100%

B. 60%

C. 40%

D. Cannot say

Answer: C

Explanation:

Support means how common it is.

40 out of 100 = 40%.

Q2.

Out of 100 customers, 30 buy both Milk and Bread.

What does this 30% represent?

A. Lift

B. Confidence

C. Support of Milk

D. Support of Milk & Bread

Answer: D

Explanation:

It shows how common both items together are.

Q3.

If 40% buy Milk and 50% buy Bread, some people will buy both even by chance.

Lift helps us understand:

A. Popularity

B. Discount percentage

C. Whether the connection is real or coincidence

D. Store profit

Answer: C

Explanation:

Lift checks if items are truly connected.

Q4.

If Lift = 1, it means:

A. Strong connection

B. No connection (just coincidence)

C. Negative relationship

D. Very popular items

Answer: B

Explanation:

Lift = 1 means no special relationship.

Q5.

If Lift is greater than 1, it means:

A. Items avoid each other

B. Items are independent

C. Items are positively connected

D. Items are rare

Answer: C

Explanation:

Lift > 1 means a positive association: the items are bought together more often than chance would predict.

Q6.

If Lift is less than 1, it means:

A. Items are strongly connected

B. Items are negatively related

C. Items are very popular

D. Items are identical

Answer: B

Explanation:

Lift < 1 means the items are bought together less often than chance would predict.

Q7.

In a movie theatre:

60% buy Popcorn

70% buy Cold Drink

55% buy both

If most popcorn buyers also buy drinks, Lift will likely be:

A. Equal to 1

B. Less than 1

C. Greater than 1

D. Zero

Answer: C

Explanation:

Expected by chance: 60% × 70% = 42%. Observed: 55%. Since 55% is more than 42%, Lift = 0.55 / 0.42 ≈ 1.31, which is greater than 1.

Q8.

Support tells us:

A. Whether items influence each other

B. How frequently something happens

C. The profit margin

D. The algorithm used

Answer: B

Explanation:

Support = Popularity.

Q9.

If 80 out of 100 customers buy Tea, Tea has:

A. Low support

B. High support

C. Low lift

D. No lift

Answer: B

Explanation:

80% is very common → high support.

Q10.

Which statement is correct?

A. Support tells us relationship strength

B. Lift tells us popularity

C. Support tells popularity; Lift tells relationship strength

D. Both are same

Answer: C

Explanation:

Support = How common

Lift = How strong the connection

Q11.

If Milk and Bread are bought together exactly as expected by chance, Lift will be:

A. 0

B. 1

C. 2

D. 10

Answer: B

Explanation:

Exactly as expected → no special connection → Lift = 1.

Q12.

If two products have very high support but Lift = 1, this means:

A. They are strongly related

B. They are unrelated but popular

C. They are negatively related

D. They are rare

Answer: B

Explanation:

Both are popular, but not specially connected.

🛒 Easy MCQs on Apriori Algorithm (Based on Examples)

Q1.

In the grocery example, Eggs appeared only once. Why was it removed?

A. It was expensive

B. It was not popular enough

C. It was defective

D. It had high lift

Answer: B

Explanation:

Apriori removes items that do not meet minimum popularity (minimum support).

Q2.

In the grocery example, Milk and Bread were kept because:

A. They were cheap

B. They were bought frequently

C. They were new products

D. They had discounts

Answer: B

Explanation:

Apriori keeps only frequently bought items.

Q3.

Why did we NOT check Milk & Eggs combination?

A. Eggs were removed earlier

B. Milk was removed

C. It was too expensive

D. It had high confidence

Answer: A

Explanation:

Apriori rule: If a single item is not frequent, its combinations are not checked.

Q4.

In the movie theatre example, Nachos was removed because:

A. It was unhealthy

B. It appeared very few times

C. It had strong association

D. It was expensive

Answer: B

Explanation:

Nachos appeared only once, below minimum requirement.

Q5.

If Popcorn and Drink appear together many times, the theatre should:

A. Stop selling them

B. Separate them

C. Create a combo offer

D. Remove Drink

Answer: C

Explanation:

Apriori helps identify popular combinations for marketing.

Q6.

In the hospital example, Fever and Cough were considered important because:

A. They were rare

B. They appeared together many times

C. They had no connection

D. They were expensive treatments

Answer: B

Explanation:

Frequent co-occurrence makes them a strong pattern.

Q7.

In the online shopping example, Printer was removed because:

A. It was not profitable

B. It was rarely bought

C. It had high support

D. It was damaged

Answer: B

Explanation:

Apriori removes rare items first.

Q8.

Apriori first checks:

A. Large item combinations

B. Only pairs

C. Single items

D. Triples

Answer: C

Explanation:

Apriori always starts with single items.

Q9.

If an item is not popular individually, Apriori will:

A. Still check its combinations

B. Increase its support

C. Ignore its combinations

D. Promote it

Answer: C

Explanation:

If a single item is weak, bigger combinations with it are ignored.

Q10.

The main goal of Apriori in these examples was to:

A. Increase price

B. Find popular item combinations

C. Reduce stock

D. Predict weather

Answer: B

Explanation:

Apriori finds frequent itemsets to discover buying patterns.